From Cognition to Compression: Where Large Language Models End

Language gave LLMs everything it could — except the one who was speaking.

April 15, 2026

Large language models are trained on human language, and language is fundamentally a symbol system. What the symbols look like matters little; what matters is the human cognition behind them, which is also what LLMs statistically reconstruct. In this article, we'll start from human cognition and human language, work our way through the essence of large language models, and draw a map for the future of AI and humanity.

Human Cognition

Let me start with a question: how do we understand the world?

In traditional neuroscience, computer science, and the training of LLMs, there exists a common cognitive framework:

External signals enter the system, the system processes them, builds internal representations, then produces outputs. However, if we use this framework to understand how the brain works, it has a fatal flaw: it presupposes a clear boundary — the brain is inside the body, the world is outside, and the two are entirely separate.

In reality, that boundary is nowhere near as clean as we assume. The brain is not an isolated processor deployed into the environment. It is part of an organism that grew out of the environment — and has never operated independently from the world.

The Inseparability of Human Cognition and Environment

From an evolutionary perspective, every complex life form that exists today evolved from a single eukaryotic cell. Every step in that evolutionary process was a response to some environmental pressure. Humans were not designed and then placed into the environment; we were sculpted by it. Every bone, every neural pathway, embodies a historical response to the world.

From the perspective of biological composition, all the matter in our bodies comes from the environment. Most of the atoms in your body were outside of it a few years ago, and will return to the environment in a few years. What stands between us and our environment is not an impermeable wall but cell membranes, perpetually exchanging matter and energy with the outside world.

From the perspective of brain plasticity, our brain's structure is not determined solely by the genes we inherited through evolution — it is also continuously sculpted throughout development.

The 1981 Nobel laureates David Hubel and Torsten Wiesel demonstrated this with their experiments on kittens. They sutured shut one eye of a kitten during its critical period. The visual cortex corresponding to that eye received no input, and consequently lost the ability to respond to signals from it. Even after the sutures were removed in adulthood, the eye could no longer function normally[^1].

Phantom limbs are another classic example of brain plasticity. Some amputees continue to feel the missing limb because the mapping from the once-existing limb to the somatosensory cortex is still active. But if the patient uses a mirror, exploiting its symmetry to let the remaining limb stand in for the phantom one, then with repeated practice the brain gradually learns that the phantom limb doesn't actually exist. This achieves a kind of "phantom limb amputation": visually deceiving the brain in order to rewrite its existing structure.

Beyond these examples, our social cognition, attachment style, language acquisition, and capacity for emotional regulation are all inseparable from the environments that shaped us during critical periods.

The physical structure of our brain is the product of innate genes (inherited through evolution) and our personal environmental history.

The environment doesn't just shape our brain structure — it also modulates how the brain operates. A key mechanism is neuromodulation.

Dopamine is one of the most famous neuromodulators. Others include acetylcholine, norepinephrine, and serotonin. All of them play profoundly important roles in our lives.

Dopamine: an error signal between reality and expectation. It encodes "better or worse than expected" — not "pleasure" as commonly assumed. When reality exceeds expectations, we feel elated; when it falls short, we feel deflated. Dopamine determines what gets encoded into long-term memory. Your dopamine level right now is deciding which parts of this article you'll remember.

Acetylcholine: controls brain plasticity. In a high-acetylcholine state, incoming signals from the outside world override the brain's existing patterns — we enter a learning mode, more open to new information, more capable of forming new understanding. In a low-acetylcholine state, the brain relies more on the established patterns, shifting into retrieval mode. The very same information is processed in entirely different ways depending on the acetylcholine state.

Norepinephrine: a signal-to-noise ratio marker. It primarily supports the body's fight-or-flight response. When it's high, your response to strong stimuli is amplified — if you are anxious, you become more sensitive to anxiety-related signals, and your thinking becomes more focused and selective. When it's low, the brain's associative capacity increases, making cross-domain connections easier — which is why relaxation sometimes sparks greater creativity. The same environment, under different levels of norepinephrine modulation, produces strikingly different responses in different people.

Serotonin: the emotional tone with which you meet the world. It doesn't shape any specific emotion; it shapes the overall affective coloring of your experience. At high serotonin levels, our baseline emotional tone is neutral to positive: we can wait, tolerate delayed rewards, and sit with uncertainty. At low levels, the world feels more urgent, more threatening, and we become more prone to impulsive action. Faced with the same environment, different serotonin levels give us fundamentally different perceptions, which in turn give rise to fundamentally different behaviors.
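The "better or worse than expected" reading of dopamine is often formalized as a reward prediction error. The sketch below is a minimal illustration, assuming a simple Rescorla-Wagner-style update; the function name `prediction_errors`, the learning rate, and the reward sequence are hypothetical choices made for illustration and do not come from any specific study.

```python
def prediction_errors(rewards, learning_rate=0.5, expectation=0.0):
    """Return the error (reward minus expectation) at each step.

    After every step the expectation moves toward the reward it just saw,
    so a repeated, fully expected reward produces a shrinking error.
    """
    errors = []
    for reward in rewards:
        error = reward - expectation          # better (+) or worse (-) than expected
        errors.append(error)
        expectation += learning_rate * error  # update the expectation
    return errors


# Three rewards in a row, then an omitted reward (illustrative values).
errors = prediction_errors([1.0, 1.0, 1.0, 0.0])
print(errors)  # [1.0, 0.5, 0.25, -0.875]
```

The same reward produces a smaller and smaller signal as it becomes expected, and its omission produces a negative one, which mirrors the "elated" versus "deflated" contrast in the dopamine description.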

Whether we approach it through evolution, biological composition, brain plasticity, or neuromodulation, humans and their environment are tightly coupled. More than that: humans are part of the environment, part of the physical world, and cannot exist in separation from it. Karl Friston's framework goes even further[^2]: the brain is not a passive receiver of the world's signals. It continuously generates predictions about the state of the world, which are then corrected by reality. What we perceive is not the world itself, but our latest hypothesis about it.

At the same time, the same experience — under different neuromodulatory states — produces entirely different mental states, different modes of brain operation, different emotional experiences of the world. Each person's internal response to the same event is, mechanistically, destined to be different.

The Cornerstone of Modern Human Civilization: Abstraction

But it's not only human cognition that is inseparable from the world. Every living organism's inner experience is, like ours, tightly coupled with its environment. So what allowed humans to surpass all other species and develop an unprecedented civilization? The answer is our capacity for abstraction.

What is abstraction? It is the extraction of reusable concepts from different instances of things. "Apple" is a reusable concept extracted from different instances of apples. "Red" is a reusable concept extracted from all the red objects in reality. "Warm" was originally a reusable concept extracted from objects whose temperature was higher than our own.

How do abstractions form? Our ancestors, like most other species, received raw sensory signals from the environment and responded with biochemical reactions that manifested as behaviors. See a predator: heart rate spikes. Smell food: saliva flows.

But as the brain processed these raw sensory signals, it began to automatically detect regularities. This is where the lowest level of abstraction begins: the same shape appears repeatedly — the brain starts recognizing "circle". The same color appears repeatedly — the brain starts recognizing "red". We synthesize regularities, then perceive the world through them. When we see half of a roughly circular object, the brain is already predicting "circle" before perception is even complete.

Beyond building abstractions from sensory signals, the brain also builds abstractions from interactions between the body and the world. Take the concept of "grasping" — our ancestors lived in trees, making grasping an extremely common action. It engages many brain regions: the premotor cortex handles motor planning; the posterior parietal cortex translates visual processing results from the occipital lobe into precise movement parameters; the primary motor cortex issues motor commands; the cerebellum performs real-time calibration; the primary somatosensory cortex provides tactile feedback on the action — and so on. From this cross-modal neuro-activation, the brain starts to build an abstraction of the idea of "grasping". It is entirely embodied — without the human body's structure and its corresponding neural interactions, the brain could not possess the abstract concept of grasping.

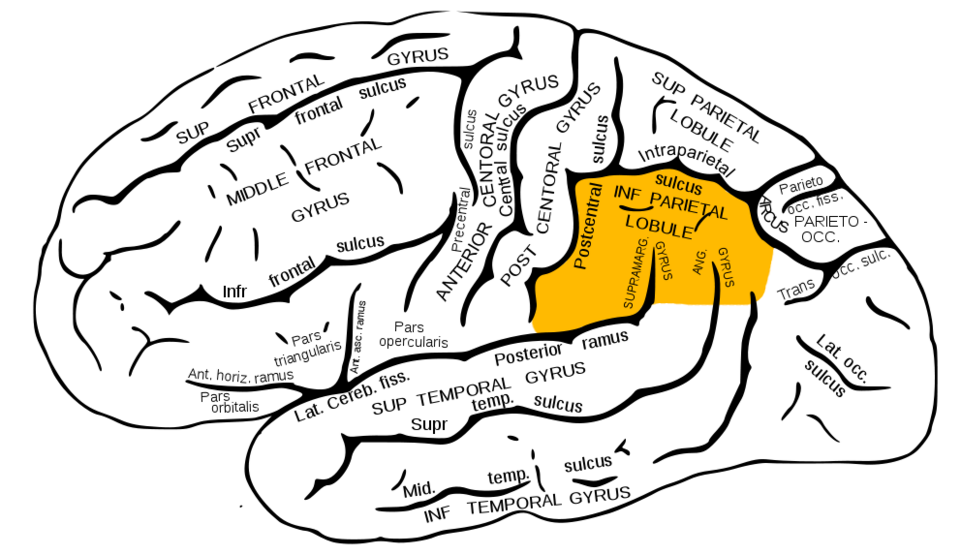

Our ability to form different abstractions owes much to the evolution of a particular brain region: the inferior parietal lobule (IPL), shown in the figure above. Part of the parietal lobe, it sits at the crossroads of vision (occipital lobes), touch (somatosensory cortex), and hearing (temporal lobes), integrating and processing sensory signals from all modalities[^3].

The parietal lobe's core function is to build a multimodal understanding of the corporeal self and the surrounding world by processing tactile, muscular, and proprioceptive signals from the body. Over the course of human evolution, the parietal lobe grew significantly larger — especially the inferior parietal lobule. As the IPL expanded, a large portion of it differentiated into two new regions: the angular gyrus and the supramarginal gyrus. These two regions are unique to humans; even where similar structures exist in other species, they are far smaller and simpler[^1].

Of the two structures that differentiated within the IPL, the left angular gyrus is arguably the key structure behind human civilization. It is intimately linked to some of humanity's most distinctive abilities: mathematics and language — that is, our capacity for abstraction. It is the renowned multimodal semantic association area, where signals from different modalities converge and are processed. If your left angular gyrus were damaged, you would lose the uniquely human capacity for abstraction: reading, writing, mathematics. You would be unable to understand metaphor, only its literal meaning. For instance, "this person is warm" — you could understand every word, but you would fail to grasp its social-emotional meaning.

In other words, the human capacity for abstraction evolved from the parietal lobe — the brain region responsible for integrating multimodal information about the self and the environment. The abstractions we build are inseparable from our bodies, our surroundings, and the multimodal interactions between the two. Beyond the grasping example, there are many others: we say an argument "stands" to describe its rigor; we say "heavy" to describe a psychological burden; "warm" to describe someone's personality; "bitter" to describe a life full of regret.

As humans gradually built abstractions about the world, something profoundly important happened: language evolved to name them. And naming, in turn, sharpened and reinforced the boundaries of those abstractions. Once an abstraction was fixed through naming, it could be passed from mouth to mouth, from generation to generation. As for why language evolved in the first place, the answer is inseparable from our social nature: the need to communicate gave rise to the tools for communication.

And so people could build new abstractions on top of existing ones. Once we had the abstractions for "circle" and "rectangle," the abstraction "rounded rectangle" became possible. Once we had abstractions for the numbers 1, 2, 3, we could learn the basic operations of addition, subtraction, multiplication, and division. Without arithmetic abstractions, there would be no algebra; without algebra, no calculus, no partial differential equations, no higher mathematics. The reason we can think about advanced mathematics today is not that we are smarter than our ancestors — it's that we stand on the abstractions they left behind. Each generation learns what the previous generation built, and builds new abstractions for the next.

There is a key mechanistic detail here: higher-level abstractions do not need to be traversed layer by layer when activated. To invoke the abstraction "justice," we don't have to start from the first layer; it can be activated directly, without conscious intermediation. But its activation is not fully equivalent to that of "red," because "red" is grounded in basic perception: its activation path is shorter, faster, more direct.

Once formed, abstractions reshape how we perceive the world. When you learn a new word as a child, suddenly you see it everywhere — not because the word appeared more often, but because your brain can finally recognize it. Our perception is filtered in real time by the abstractions we've acquired. And we cannot "forget" an abstraction the way we delete a file. Once we know something, we can never again see the world through unknowing eyes.

To summarize this section in two points: First, human cognition is, mechanistically, unavoidably individualized. This is what the philosophical concept of qualia tries to capture: that non-transferable, first-person, subjective experience. Second, from individualized embodied experience, humans construct abstractions. These abstractions fall into three categories:

High structural universality, low individuation: Numbers, logic, geometry — these concepts are highly similar across individuals because their formation is independent of personal embodied history, narrative, or emotional experience.

Low structural universality, high individuation: Grief, home, love — these take a different shape in every person's mind, deeply sculpted by individual embodied experience. Language can only point toward them; it can never fully transmit them. When you say "I'm sad," what I understand is my own sadness.

Mixed structural universality and individuation: Most abstractions fall here — apple, fairness, danger. They have enough shared structure for communication, but enough individual variation that complete transmission is never possible.

Human Language

The function of language is to build bridges between the different abstraction systems of individual humans.

Language is not the richest channel for transmitting information. In face-to-face communication, facial expressions, body language, and tone of voice all carry rich information. We often say "the eyes are the window to the soul" — sometimes a single glance lets us read someone's inner state in a profoundly rich and accurate way that no amount of words could capture.

But for the development of civilization, what matters more is not the richness of information, but its persistence, reusability, and scalability. Once an abstraction is stored in language, it persists in human civilization almost permanently. The next generation first aligns its own cognition with the existing language, then builds further from there. At the same time, language enables consensus at massive scales: law, science, and government, all essential components of large-scale modern society, cannot exist without it.

So when two individuals communicate through language, what's happening in their brains?

Uri Hasson, a neuroscientist at Princeton University, and his team conducted an experiment: they had a speaker tell an unrehearsed real-life story while scanning her brain with fMRI, then played the recording to 11 listeners while scanning theirs. The results were surprising: genuine neural synchronization appeared between speaker and listeners. This was not just statistical correlation but measurable spatiotemporal coupling[^4].

Two details are especially revealing:

This neural synchronization disappeared when communication failed. When listeners couldn't understand the speaker, the synchrony broke. This shows that neural coupling isn't automatically triggered by language — it only appears during genuine understanding.

Listeners who understood best exhibited a special phenomenon: certain brain regions activated before the speaker said the corresponding content — their brains were actively predicting what would come next.
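One simple way to picture a listener's activity trailing or preceding the speaker's is a lagged correlation between two time series. The sketch below is a toy illustration on synthetic sine waves, not the analysis used in the study; the function names `pearson` and `best_lag` and all signal parameters are assumptions made for this example.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (norm_x * norm_y)


def best_lag(speaker, listener, max_lag):
    """Return the shift (in samples) at which the listener best matches the speaker.

    Positive: the listener trails the speaker.
    Negative: the listener anticipates the speaker.
    """
    scores = {}
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            spk, lst = speaker[:len(speaker) - lag], listener[lag:]
        else:
            spk, lst = speaker[-lag:], listener[:len(listener) + lag]
        scores[lag] = pearson(spk, lst)
    return max(scores, key=scores.get)


# Synthetic "speaker" signal; this is not real fMRI data.
speaker = [math.sin(0.3 * t) for t in range(200)]
trailing = [math.sin(0.3 * (t - 3)) for t in range(200)]      # echoes the speaker 3 samples later
anticipating = [math.sin(0.3 * (t + 2)) for t in range(200)]  # runs 2 samples ahead

print(best_lag(speaker, trailing, max_lag=5))      # 3
print(best_lag(speaker, anticipating, max_lag=5))  # -2
```

A negative best lag corresponds to the second detail: the listener's signal leads the speaker's, as if predicting what comes next.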

In other words, understanding is not passive reception — it is approximate reconstruction. When you hear me say "apple", you reconstruct your "apple" in your brain using your own abstraction system. What passes between two brains is not information transfer — it is the synchronization of activation patterns. And this synchronization depends on shared background, shared experience, and shared abstractions — without sufficient common ground, synchronization cannot occur.

This means that even the most complete human-to-human communication is never a complete reconstruction. What we achieve is getting the other person's brain to activate a sufficiently similar pattern.

So what does language actually transmit? It transmits the greatest common divisor of what our brains can synchronize: the discernible, mutually understandable portion. What gets lost is the individual, the unrepeatable, the part anchored in qualia. When I say the food is too salty, you understand what that means — but the specific taste of "saltiness" on my tongue, the particular experiences of "salty" I carry with me, you will never experience. Language doesn't have that channel.

Every sentence, at its core, is an act of lossy compression. Before you speak, your brain is in a multimodal neural activation state, backed by your rich inner experience. But once spoken, it becomes merely a mapping onto the existing language inventory — a greatest common divisor. This loss is not accidental. It's not because our vocabulary is too small or our expression too imprecise. It is structural and directional. A certain type of information is systematically stripped away in the mapping from rich bodily and neural states to language.
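The idea of speech as lossy compression can be sketched as mapping a rich state onto the nearest entry in a small shared vocabulary. This is a minimal illustration only: the two-dimensional "inner states", the three-word vocabulary, and the function name `to_word` are all hypothetical, and a single shared prototype per word is a simplifying assumption.

```python
def to_word(state, vocabulary):
    """Map an inner state to the closest named prototype (squared Euclidean distance)."""
    return min(
        vocabulary,
        key=lambda word: sum((s - p) ** 2 for s, p in zip(state, vocabulary[word])),
    )


# Hypothetical two-dimensional "inner states"; the axes and numbers are made up.
vocabulary = {"sad": (-1.0, 0.0), "happy": (1.0, 0.0), "calm": (0.0, 1.0)}

speaker_state = (-0.9, 0.3)
other_state = (-0.7, -0.4)

word = to_word(speaker_state, vocabulary)  # what the speaker says
reconstructed = vocabulary[word]           # what can be rebuilt from the word alone

print(word, to_word(other_state, vocabulary))  # sad sad
print(reconstructed == speaker_state)          # False
```

Two different states collapse onto the same word, and the word alone cannot recover either of them. The loss is directional in the sense described above: whatever distinguishes the individual state from the shared prototype is what gets dropped.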

The Boundaries of Large Language Models

We know that large language models are trained on human-generated text, with the objective of predicting the next token. What they statistically reconstruct is the human cognition that language represents. More specifically: the named abstractions within the human brain. Even at 100% fidelity, what the model can recover is only the portion of the neural state that has been named, recorded, and is amenable to neural synchrony.
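To make "predicting the next token" concrete, here is a deliberately tiny stand-in: a bigram model that counts which token follows which. Real LLMs use neural networks trained on vastly larger corpora, so this is only a sketch of the objective; the toy corpus and the function names `train_bigram` and `next_token_probs` are assumptions for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count, for every token, how often each other token follows it."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_probs(counts, prev):
    """Turn the follow-up counts for `prev` into a probability distribution."""
    followers = counts.get(prev, Counter())
    total = sum(followers.values())
    return {tok: c / total for tok, c in followers.items()}


# Toy corpus, made up for this example.
corpus = "the apple is red . the apple is sweet . the sky is blue .".split()
model = train_bigram(corpus)
probs = next_token_probs(model, "the")
print(probs)  # "apple" is twice as likely as "sky" after "the"
```

Everything the model "knows" about apples is the pattern of words that surround the word in text. Nothing in the counts refers to an apple that was ever seen or tasted.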

But if we underestimate what LLMs can do, we'd be making a serious mistake. As we've discussed, these named abstractions are the cornerstone of human civilization. Let me emphasize once more:

They make communication possible. Law, money, religion, morality — the concepts that form the core of civilization are essentially shared imaginaries constructed through language. Without shared named abstractions, none of these exist, and neither does modern human society.

They make the continuity of civilization possible. Einstein didn't have to reinvent geometry. Each generation builds on the named abstractions left by the last — this is one of humanity's most powerful capabilities as a species.

They are our shared toolkit for thought. They let us talk about things that don't exist — the future, justice, entropy — and invent new things based on them. In a certain sense, the boundary of named abstractions defines the boundary of our thinking.

Through training on massive text corpora, LLMs have statistically encoded nearly all of humanity's named abstractions, along with the complex relationships and topological structures among them. Not some domains, not some languages — all of them. This includes entirely opposing viewpoints, theories, and human behaviors.

For the first time in human history, an entity has encoded an entire civilization into a unified parameter space. This also makes it unprecedentedly powerful at many related tasks:

Cross-domain knowledge synthesis: A cardiologist would need weeks (if not more) to read the fluid dynamics literature, then weeks more to think about its relationship to cardiology. A large language model has no such time constraint. Its cross-domain synthesis ability doesn't come from being smarter than experts — it comes from being the convergence point of all expert knowledge.

All language-related tasks: Translation, summarization, style transfer, rewriting, dialogue generation... the list is virtually endless, because the model's training objective inherently requires it to internalize grammatical structure, semantic relations, pragmatic rules, stylistic conventions, and cross-linguistic correspondences — all of these are byproducts forced into existence by a single objective.

Formal reasoning within known concept spaces: Deriving conclusions within a realm where rules have been thoroughly defined and extensively documented. The most widely applied example today is code generation. Programming languages have strict syntactic rules, algorithms have explicit logical structures, and common problems have established solution patterns. The content has been discussed, annotated, and debugged in the training data in enormous volume. The model has internalized the rules and performs effective pattern matching within this space. But the boundary of its reliability is precisely the boundary of the known concept space: once a problem requires genuine understanding of the trade-offs behind business logic, or involves truly novel architectural design, performance drops significantly.

In these tasks, you don't need any specific individual's embodied experience, you need shareable, expressible, structural knowledge. LLMs excel at these things because they are, by nature, a high-fidelity mirror of exactly this kind of knowledge.

But there lies a fundamental issue. The corpus was already lossy before training even began. Every person who generated training data compressed their rich inner experience into named abstractions, and those named abstractions, when transmitted through language, retained only the portion synchronizable between brains.

What a large language model learns is a statistical reconstruction of a signal that has already been severely compressed, a static projection of a vivid, living process. Real, but dimensionally reduced. The thing that cast this projection? The model has never touched it. This is a structural absence. More specifically: LLMs inherited the concepts, but never had the subject behind those concepts — a living being that truly interacts with its environment and possesses its own unique inner experience.

Can more data, more modalities, or better alignment methods solve this problem? I believe they cannot.

More data: The dimensions missing from language are not missing because of insufficient data — language structurally cannot transmit certain signals. You cannot aggregate a dimension that doesn't exist. More text is just more instances of the same directional lossy compression.

More modalities: The missing dimension is not perceptual — it is embodied. Even adding video, audio, and images only provides more data about the world — what cameras captured, what microphones recorded. These signals are still "descriptions of the world," not "being in the world."

The relationship between humans and the world is fundamentally different from that between LLMs and the world. As we discussed in the section on the inseparability of human cognition and environment, humans are part of the environment, made of the same substance as the world, interacting with it continuously and in real time. Every decision you make has real consequences; every failure you endure truly happens to you. This is not data input; these are real stakes.

It is precisely this "being part of the world" that gives humans a form of creativity that LLMs structurally lack. An LLM's "creation" is recombination within the space of existing patterns — its output, however sophisticated, is some function of the training data. Human creation can grow from real encounters — a thought that has never appeared in any corpus, because it arose from a specific person at a specific moment in a real collision with the world.

This dimension cannot be solved by adding more modalities.

Better alignment methods: Reinforcement learning adjusts probabilities within the existing parameter space but does not rewrite the structure of the space itself. Pretraining is, in both scale and depth, beyond the reach of any subsequent fine-tuning. The model's foundational understanding of the world is established during pretraining; everything that follows is decoration, not renovation.

What if we give the model memory, tools, and autonomy — turn it into an agent — would that solve the missing dimension?

From my observation of current agents, they inherit the same absence. An agent's memory and capabilities are language-based; they don't fill in the missing dimension. Agents amplify human power, but the source of that power remains human judgement anchored in qualia. The agent is the lever; the force still comes from the person holding it.

But I want to be honest: could a sufficiently long-lived, embodied agent with real stakes develop something resembling a subject? This is what I consider the most valuable open question. I don't have the answer. But the answer to this question will determine the trajectory of the future.

The Future / Being Human

For the long-term relationship between large language models and humans, the direction I consider most promising is this: agents cannot fully replace humans, but they will become humanity's most powerful assistants. They are like an extraordinarily powerful engine with no sense of direction: the power is real, but the direction depends entirely on the person driving it.

Of course, that immense capability combined with autonomy also poses significant risks to society — something I'll unpack separately in a future article. Today I want to focus on the collaborative model between humans and large language models.

In a future where humans and LLMs coexist, each has an irreplaceable core domain: LLMs command humanity's shared, expressible, named knowledge and reasoning; humans possess what cannot be replicated — individuality rooted in embodied experience, inner life, and a life story that belongs to no one else.

More concretely, a large language model functions as an amplifier. It operates within known territory, rearranging and recombining named abstractions with astonishing speed and scale. Programming logic, musical styles, writing structures: its outputs in these areas are fundamentally new arrangements of existing patterns. Given existing languages, frameworks, and algorithms, AI's capacity within this space is beyond any human's reach. But it cannot expand the territory itself.

Humans, by contrast, are the navigators. True creation is forming an abstraction that didn't previously exist — a new cross-modal binding, born from a specific person's real experience at a specific moment in their life. Not recombination, but origination. AI art is sophisticated, but it is a blend of existing styles. Human artists create new modes of perception themselves.

Ultimately, direction comes from the human, from a person with a subject, a person who knows what they truly want. AI amplifies this force. But the source of the force is in the person. This is what I consider the most important design principle for all AI products: confront AI's structural absence head-on. Don't try to fill every gap with AI, especially those that fundamentally require human qualia-anchored judgement. Doing so wastes AI's strengths and erodes what is most uniquely human.

Education is a prime example. Many AI education products are doing exactly this: making every learning pathway AI-driven, so that students never need to truly interact with teachers or classmates and can "learn efficiently" just by interacting with an AI system.

But learning is not just information transfer. A great teacher's impact on students comes from their genuine passion, their real confusion over a question, their very presence as a human being. These are qualia-anchored and cannot be replicated. Filling the entire educational process with AI optimizes efficiency but cuts off something far more important: the kind of interest and curiosity that can only be sparked by a real person. AI's best position is to free teachers from repetitive content delivery, leaving more time for the things only humans can do.

But the "human as navigator" framework presupposes something: that the human already knows where they're going, already has a clear direction, and AI merely executes. This presupposition sidesteps a more fundamental question, one that I believe is the deeper significance of LLMs. They force us to answer: what does it mean to be human?

We live in an industrialized, technologically driven society. Under the social structure of capitalism, each individual is imperceptibly reduced to a tool within the system: work occupies most of people's lives, the content of work is to fulfill organizational objectives, and in some hyper-competitive societies, a person's worth is defined entirely by their ability to achieve goals. Efficiency is the supreme principle; instrumental rationality is the dominant logic.

And now, there's an entity that can achieve goals faster, more accurately, and more tirelessly. If a person's value derives from their ability to complete tasks, then when a more powerful tool arrives, what meaning does that person's existence still hold?

This question is unsettling not because the answer doesn't exist, but because we haven't seriously asked it in far too long, or perhaps the influence of the environment has been so overwhelming that we've fully internalized the value system it imposes on individuals.

We arrive in this world as living beings, at a specific time, in a specific place, experiencing all of it through a specific body: growing, being hurt, loving, losing, creating, being confused. These experiences are not a means to some end. They are the purpose itself.

A tree doesn't exist to produce timber. It sways in the wind and grows in the sunlight; that, in itself, is what it means to exist.

The same is true for humans. We are not born to be more efficient at reaching goals. We are sentient beings, living in a real world, engaged in real, unrepeatable interactions with it. The experiences born from these interactions — joy, pain, confusion, epiphany — each one is unique, each one is irreplaceable, each one belongs only to you.

The arrival of LLMs is not merely a threat to humanity; it also provides a mirror. It reflects back the misunderstanding we've long held about ourselves: that we turned ourselves into tools, and then feared being replaced by a better tool.

The real question was never "What can AI do?" It has always been "What kind of life do we want to live?"

Footnotes

[^1]: Hubel, David H., and Torsten N. Wiesel. "The period of susceptibility to the physiological effects of unilateral eye closure in kittens." The Journal of Physiology 206.2 (1970): 419-436.

[^2]: Parr, Thomas, Giovanni Pezzulo, and Karl J. Friston. Active Inference: The Free Energy Principle in Mind, Brain, and Behavior. MIT Press, 2022.

[^3]: Ramachandran, Vilayanur S. The Tell-Tale Brain: A Neuroscientist's Quest for What Makes Us Human. WW Norton & Company, 2012.

[^4]: Hasson, Uri, et al. "Brain-to-brain coupling: a mechanism for creating and sharing a social world." Trends in Cognitive Sciences 16.2 (2012): 114-121.

Google engineer with a background in Knowledge Graphs and Entity Linking research at Tsinghua University. Her work explores the intersection of AI, language, and human meaning.